The Memory Revolution: Why AI That Remembers Is a Different Game Altogether

Persistent AI memory isn't a feature — it's a different paradigm. Here's what's changing, why it matters, and what to do about it in the next six months.

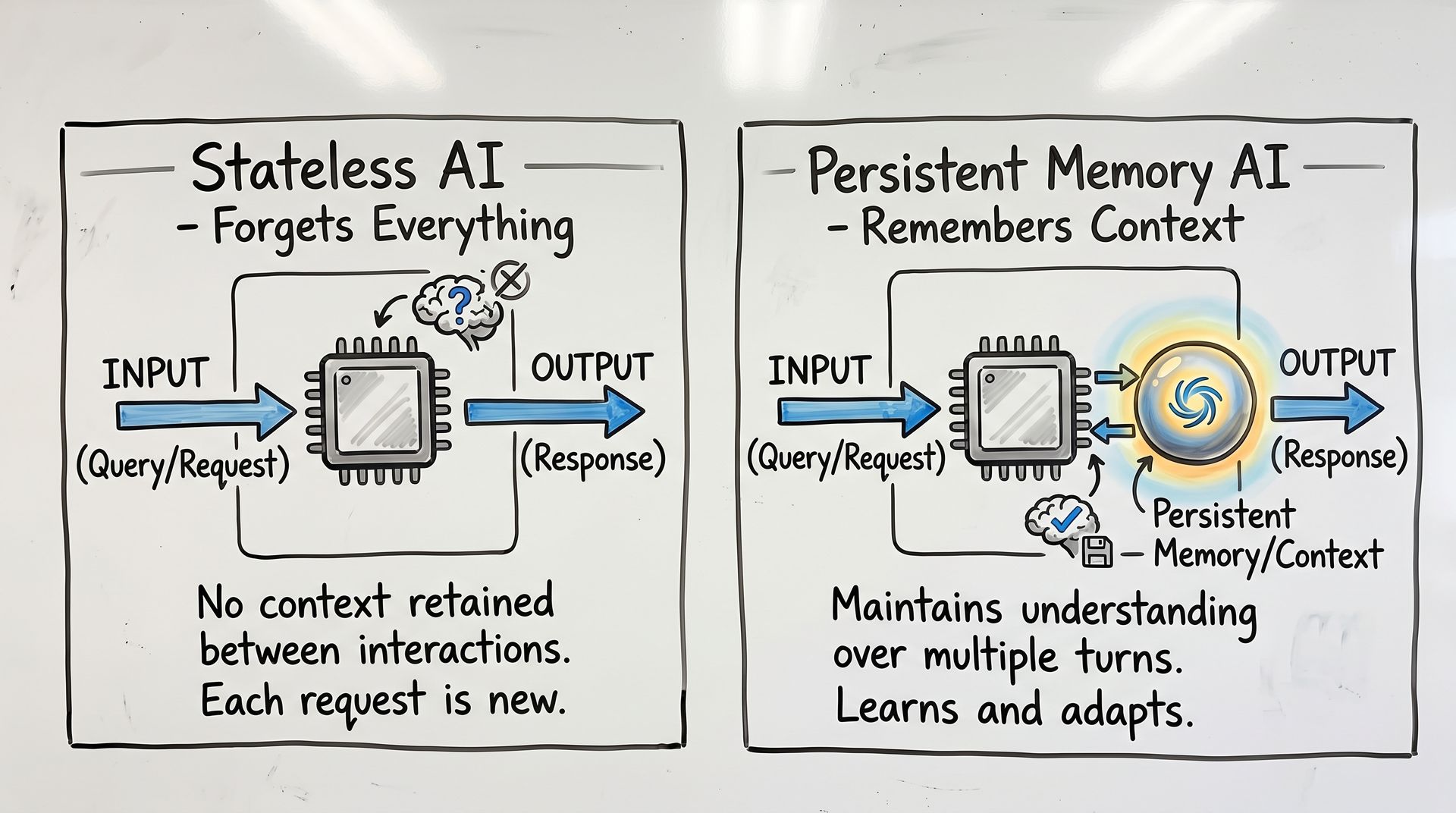

There's a version of AI that forgets everything the moment the conversation ends. You start fresh every session, re-explain context, re-establish preferences, and retread ground you've already covered. Useful, but ultimately a very sophisticated autocomplete.

Then there's a version that's emerging now — AI that remembers. Not in the way a search history remembers what you clicked. In the way a sharp colleague remembers what you said you'd do, what your business cares about, where you've been burned before, and what "good" actually looks like for your specific situation.

That's the shift I want to talk about today.

What's Actually Changing

The race in AI has been dominated by scale — bigger models, more parameters, longer context windows. But there's a quieter race happening that's arguably more consequential: teaching AI to build persistent representations of what it learns across interactions.

This isn't about storing chat logs. It's about something more structural. When an AI system has persistent memory of your business, it can do things that are genuinely different from stateless inference:

It knows your product catalog well enough to spot inconsistencies in real time, not just answer questions about it. It understands your customers well enough to flag when a pattern in support tickets suggests a product problem, not just respond to each ticket. It remembers your business rules well enough to apply them consistently across every interaction, without you re-explaining them each time.

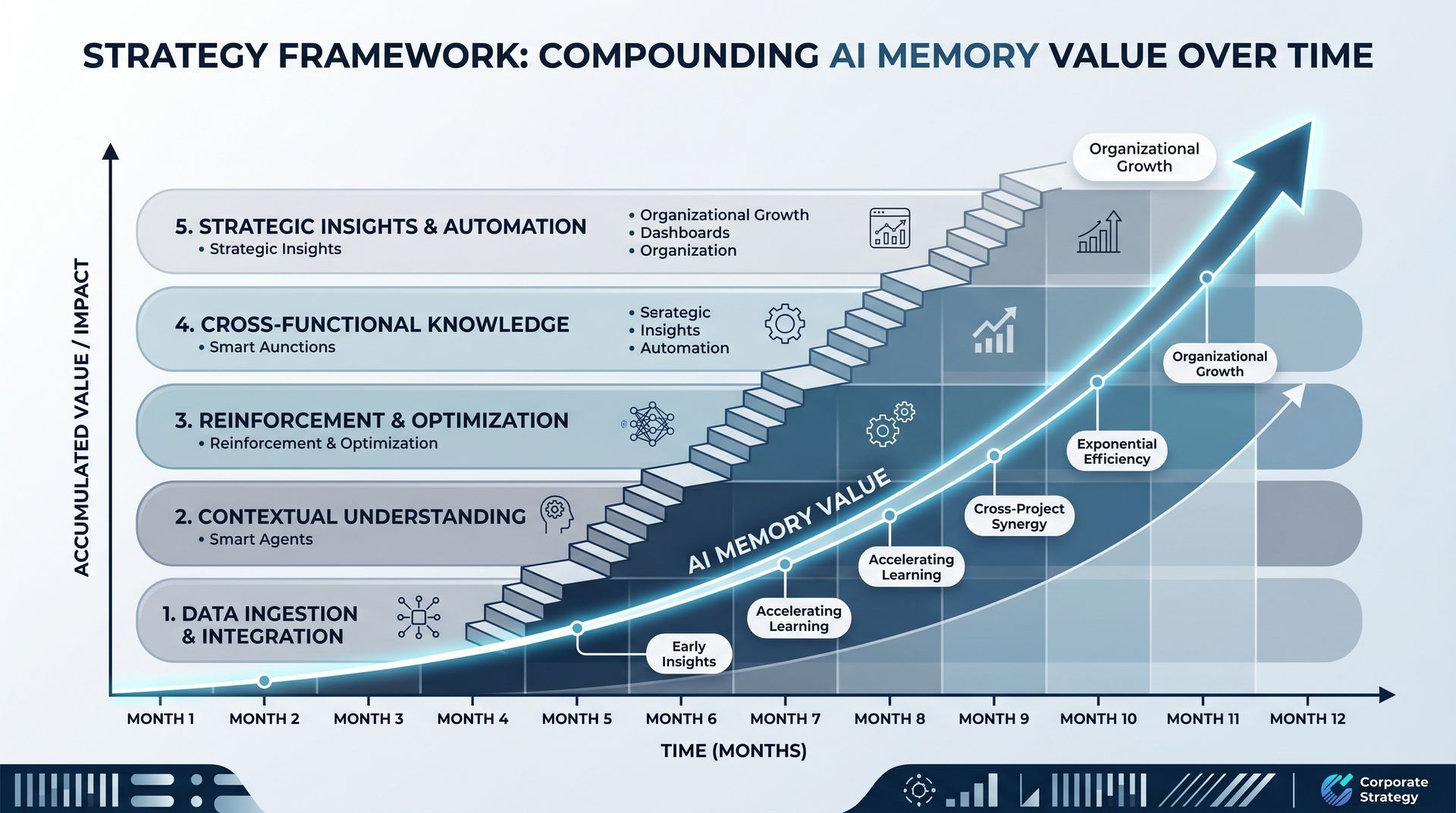

The practical version is already here in early form. Business AI tools that build persistent models of your operations. Productivity AI that tracks your priorities and follows up on things you committed to. Customer-facing AI that knows returning users and adapts accordingly. These aren't future states — they're shipping now, and they're going to get significantly more capable in the next 12 months.

Why This Changes the Value Calculation

Here's what most people miss about AI memory: it turns AI from a tool you use into infrastructure you build on.

A stateless AI is like a contractor you bring in for a project. Useful for that project, but starts from zero every time. A persistent-memory AI is more like an employee — it accumulates institutional knowledge, gets better at working with you, and becomes more valuable the longer it's around.

This changes the economics significantly. The ROI on a persistent AI doesn't just come from what it does in a single interaction; it compounds over time. Six months in, an AI with good memory of your business can be doing work that might take a new human hire years to learn. And unlike a human, it doesn't quit and take that knowledge with it.

This is why the companies building memory infrastructure now are making a bet that looks aggressive but might not be aggressive enough. They're essentially trying to lock in compounding advantages — the longer they operate, the smarter their systems get about their specific contexts in ways that are hard for competitors to replicate.

The Real Risks Nobody's Talking About

I want to be direct about something that doesn't get enough attention in the excitement around AI memory: this stuff is sensitive, and the security implications are serious.

When AI systems build persistent memories of your business, they're storing information that could be catastrophically valuable to competitors. Your business logic, your customer relationships, your operational weaknesses, your strategic decisions — all of that becomes data that lives somewhere, managed by some system, potentially vulnerable to breach.

The companies that get this wrong, building memory systems without enterprise-grade access controls, audit trails, and data residency guarantees, are going to cause real damage. And the regulatory attention coming for AI systems with persistent memory is going to be significant. GDPR and CCPA already give individuals the right to have their personal data deleted, and GDPR requires that personal data not be kept longer than necessary. AI systems that remember everything about users indefinitely sit squarely in that territory, and the successors to these laws will have even more to say about them.

The organizations that will be ahead of this aren't the ones waiting for regulation to force their hand. They're the ones building privacy-by-design into memory systems now — giving users visibility and control over what AI remembers, implementing data minimization principles, treating memory like the liability it can be if mishandled.
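To make "privacy-by-design" a little more concrete, here is a minimal sketch of what those three ideas can look like at the storage layer: an allowlist of categories the system may remember at all (data minimization), a retention window after which memories are dropped, and per-user `view` and `forget` operations (visibility and control). Everything here is hypothetical: the `MemoryStore` class, its method names, and the example categories are illustrations, not any vendor's API, and a real system would also need persistence, access controls, and audit logging.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class Memory:
    user_id: str
    category: str
    content: str
    created_at: datetime


@dataclass
class MemoryStore:
    """Hypothetical in-memory store illustrating privacy-by-design defaults."""
    # Data minimization: only categories on this allowlist are ever stored.
    allowed_categories: frozenset[str]
    # Retention: memories older than this window are dropped.
    retention: timedelta
    _items: list[Memory] = field(default_factory=list)

    def remember(self, user_id: str, category: str, content: str, now: datetime) -> bool:
        """Store a memory only if its category is allowed; return whether it was kept."""
        if category not in self.allowed_categories:
            return False
        self._items.append(Memory(user_id, category, content, now))
        return True

    def purge_expired(self, now: datetime) -> None:
        """Drop every memory that has outlived the retention window."""
        cutoff = now - self.retention
        self._items = [m for m in self._items if m.created_at >= cutoff]

    def view(self, user_id: str, now: datetime) -> list[Memory]:
        """Visibility: show a user everything currently remembered about them."""
        self.purge_expired(now)
        return [m for m in self._items if m.user_id == user_id]

    def forget(self, user_id: str) -> int:
        """Control: delete everything about a user; return how many memories were removed."""
        before = len(self._items)
        self._items = [m for m in self._items if m.user_id != user_id]
        return before - len(self._items)


# Hypothetical usage: a 90-day retention window and two allowed categories.
now = datetime(2025, 1, 1, tzinfo=timezone.utc)
store = MemoryStore(frozenset({"preference", "business_rule"}), timedelta(days=90))
store.remember("u1", "preference", "Prefers invoices in EUR", now)
store.remember("u1", "health", "Mentioned a medical condition", now)  # not on the allowlist, so rejected
print(len(store.view("u1", now)))                        # 1
print(len(store.view("u1", now + timedelta(days=91))))   # 0, expired
```

The design choice worth noting is that minimization and retention are enforced by default inside the store, so forgetting does not depend on someone remembering to clean up later.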

What This Means for Your Business — The Practical Version

Not a blueprint for the next decade. A checklist for the next six months.

Start with what you'd want remembered.

Before you deploy any AI system with memory capabilities, do the thought exercise: if this AI remembered everything about every interaction it had with our business, what would we want it to know? More importantly, what would we absolutely not want it to know or remember? That boundary is where your governance work starts.

Treat memory infrastructure as a business asset, not an IT project.

The companies that will extract the most value from AI memory over time are the ones treating it as strategic infrastructure — with clear ownership, defined access policies, and explicit decisions about what gets remembered, what gets forgotten, and on what timeline.

Pilot with high-value, well-bounded use cases.

The best starting points for AI memory aren't the most impressive demos. They're the workflows where consistent application of institutional knowledge is currently the bottleneck — compliance checking, customer intake processes, product configuration rules. The unglamorous places where "we've always done it this way and nobody can articulate why" is actually costing you.

Build the human review layer before you need it.

AI memory systems will make errors — they'll remember things incorrectly, infer patterns that aren't real, build models that drift from reality over time. The organizations that will use AI memory safely are the ones that built feedback mechanisms and review processes from the beginning, not as an afterthought when something goes wrong.

The Bottom Line

The shift from stateless to persistent AI isn't just a technical upgrade. It's a fundamental change in what AI can do for a business — and in what responsibilities come with deploying it.

The window for getting this right is shorter than it feels. Memory-based AI systems are moving from early experiment to infrastructure quickly. The organizations that build the governance, security, and feedback infrastructure now will be able to deploy aggressively later. The ones that wait until the technology is more mature will be playing catch-up in a space where the advantages compound fast.

The question isn't whether AI memory will become standard. It's whether you'll be building the systems that do it responsibly and strategically — or scrambling to secure and govern systems that already know too much about you.