The Agentic Era: Why Your Next Best Employee Might Not Be Human

There's a version of AI adoption that gets a lot of press: the flashy demos, the viral clips, the "AI just did X" posts. Then there's the version that is actually reshaping businesses: quieter, less photogenic, and far more consequential.

What's Actually Changing

Six months ago, most AI tools you encountered were request-response machines. You prompt, it generates, you evaluate. Useful for drafting an email, brainstorming a name, getting a first-pass summary of something long. But you were always in the loop. The AI was a tool you used.

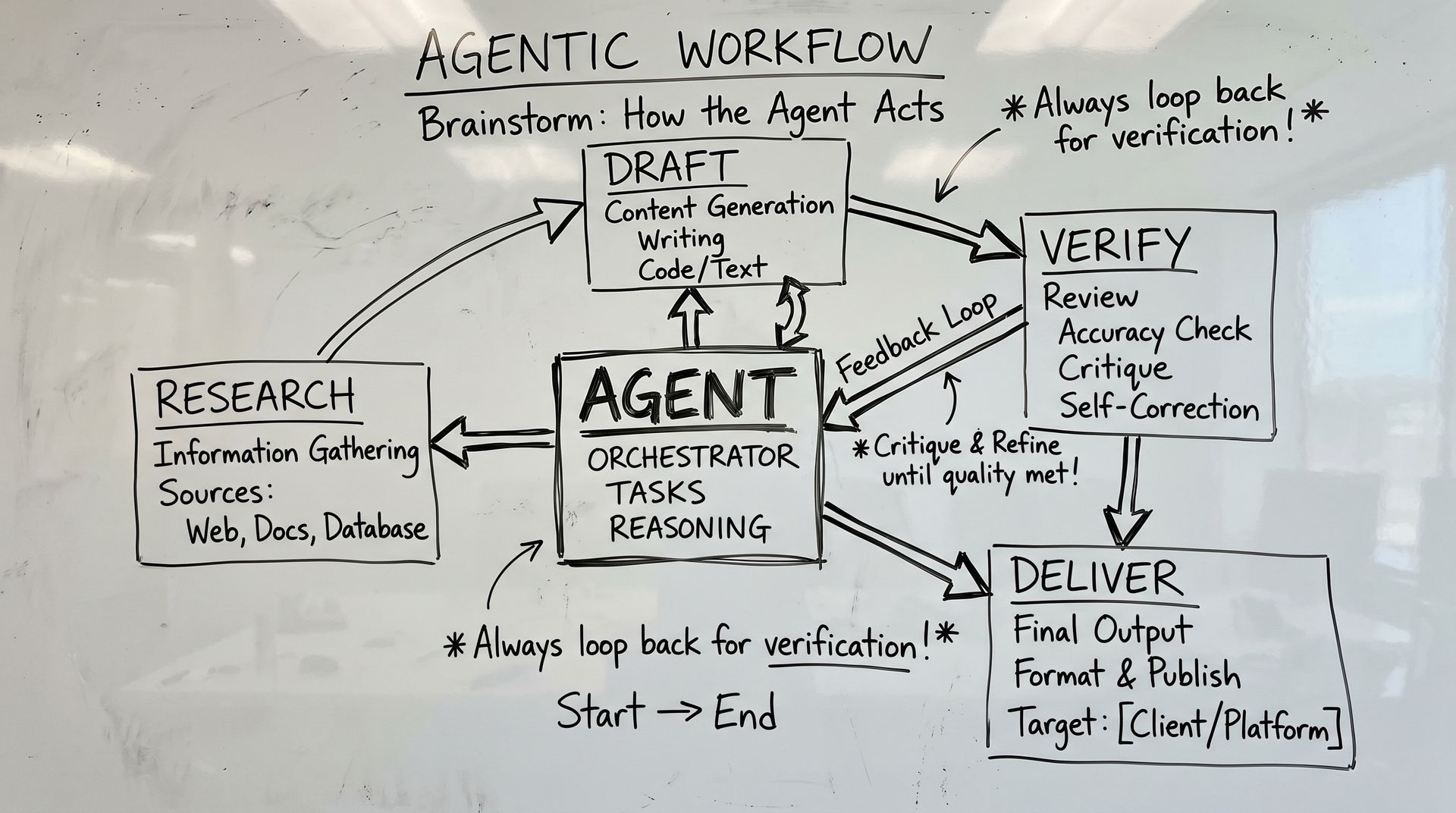

What's emerging now is fundamentally different. Agentic AI systems can plan a sequence of steps toward a goal, use tools, loop through results, correct themselves, and hand you something finished rather than something started. They don't just answer questions -- they take actions.

The practical version of this isn't a robot with a face. It's software that can research a topic, compare three competitors' pricing pages, pull your internal data, draft a recommendation, flag the risks, and send you a concise brief -- all before you've finished your first coffee. And it can do it again at 2am, consistently, without getting tired.
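To make that loop concrete, here is a minimal Python sketch of the plan, act, check, and retry cycle described above. It is an illustration under stated assumptions, not how any particular agent framework works. The plan is hard-coded as a list of steps; in a real agentic system a model would propose the plan and the checks. `ToolError`, `Step`, `RunReport`, `run_agent`, and the two example tools are hypothetical names. The behavior worth noticing is that a step whose output fails its check gets retried. If it keeps failing, the agent stops and hands back partial work rather than pressing on.

```python
from dataclasses import dataclass, field
from typing import Callable


class ToolError(Exception):
    """Raised by a (hypothetical) tool when it cannot complete its job."""


@dataclass
class Step:
    name: str
    tool: Callable[[dict], str]   # reads shared context, returns an output
    check: Callable[[str], bool]  # does this output meet the bar?


@dataclass
class RunReport:
    finished: bool
    outputs: dict = field(default_factory=dict)
    log: list = field(default_factory=list)


def run_agent(plan: list[Step], max_attempts: int = 3) -> RunReport:
    """Run a fixed plan step by step, retrying any step whose output fails its check."""
    report = RunReport(finished=False)
    context: dict = {}
    for step in plan:
        for attempt in range(1, max_attempts + 1):
            try:
                result = step.tool(context)
            except ToolError as exc:
                report.log.append(f"{step.name}: attempt {attempt} errored ({exc})")
                continue
            if step.check(result):
                context[step.name] = result  # later steps can build on this
                report.log.append(f"{step.name}: ok on attempt {attempt}")
                break
            report.log.append(f"{step.name}: attempt {attempt} failed its check")
        else:
            # Every attempt failed: stop and hand back partial work instead of guessing.
            report.outputs = dict(context)
            return report
    report.outputs = dict(context)
    report.finished = True
    return report


if __name__ == "__main__":
    calls = {"n": 0}

    def fetch_pricing(ctx: dict) -> str:
        # Simulated flaky tool: times out once, then succeeds.
        calls["n"] += 1
        if calls["n"] == 1:
            raise ToolError("timeout")
        return "A: $10, B: $12, C: $9"

    def draft_brief(ctx: dict) -> str:
        return "Cheapest competitor: C. Data: " + ctx["fetch_pricing"]

    plan = [
        Step("fetch_pricing", fetch_pricing, check=lambda out: "$" in out),
        Step("draft_brief", draft_brief, check=lambda out: out.startswith("Cheapest")),
    ]
    report = run_agent(plan)
    print(report.finished)  # True
    for line in report.log:
        print(line)
```

The `max_attempts` cap is the simplest guard against an agent looping forever on a step it cannot complete. The `log` gives a human something to read when the run comes back unfinished.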

The Hype vs. The Reality

Let me be precise about where we actually are, because the gap between the demo floor and real deployments matters.

Agentic tools are genuinely capable at well-bounded, repeatable workflows. If you could plausibly write a procedure manual for the work (research, compile, format, check, send), that task can now be automated in ways that weren't reliable 18 months ago. Not theoretical. Not experimental. Real deployments, real cost reduction, real time savings.

But the moment you step outside well-defined workflows, agentic AI still breaks in predictable ways. It will confidently pursue the wrong goal. It will make assumptions that a human would catch in about four seconds. It will optimize for the wrong metric if the metric wasn't specified precisely enough. These aren't edge cases -- they're the current ceiling.

The companies winning with agentic AI right now understood something counterintuitive: the way to get more value from a more powerful AI is to be more precise about what you actually want. The AI doesn't turn a vague request into something useful. It turns a precise one into something powerful. That's a fundamentally different design philosophy from "prompt and hope."

The Real Competitive Question

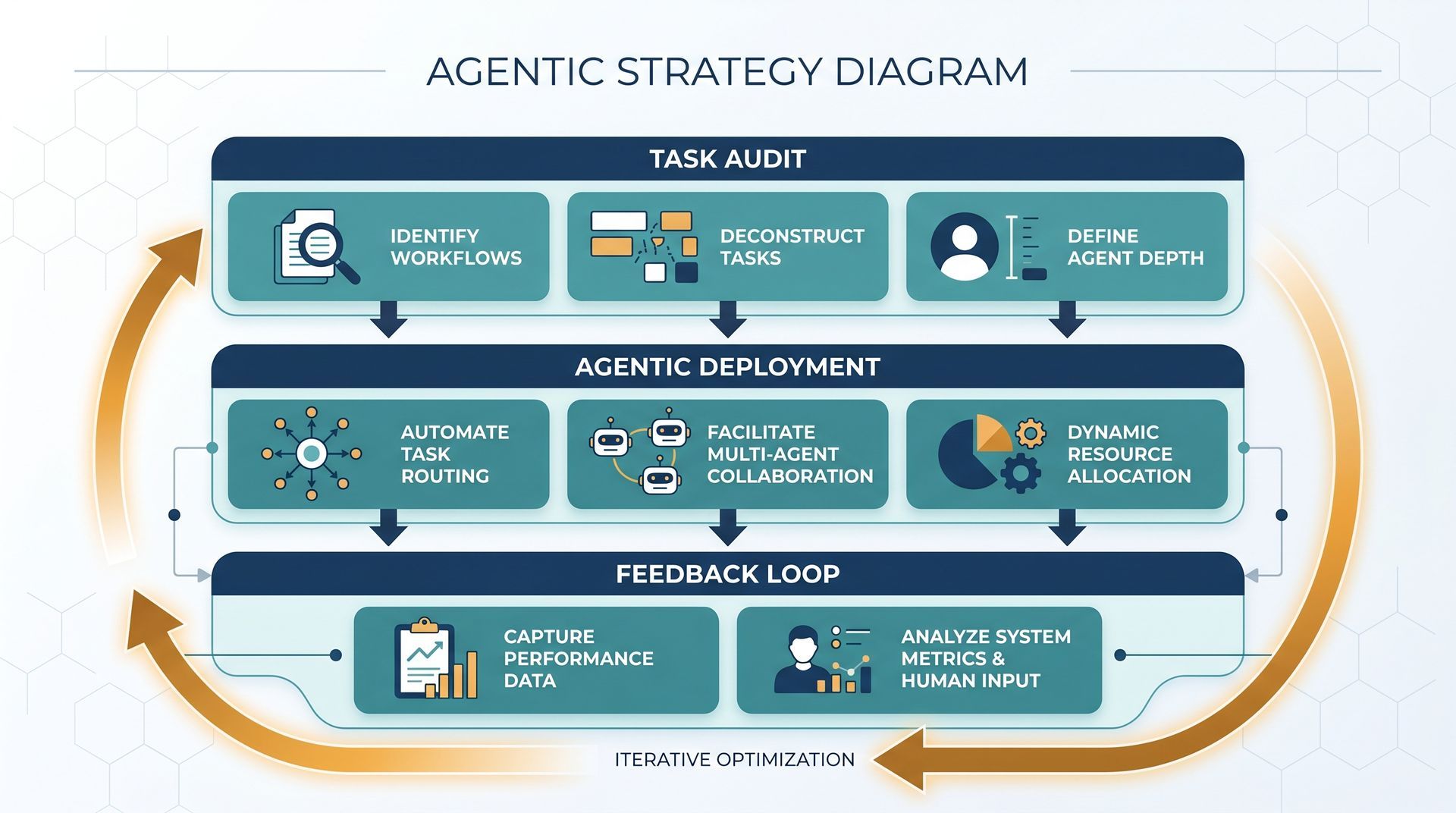

Here's the angle that isn't getting enough attention. The competitive advantage from AI isn't coming from who has the best model; models are commoditizing fast. It's coming from who has the best workflow design: the clearest thinking about what your business actually needs, translated into instructions precise enough for an AI to execute reliably.

That's a much harder thing to build than it sounds. It requires understanding your business deeply enough to specify what good looks like in enough detail that a machine can operationalize it. Most organizations haven't done that work. They've been running on informal knowledge, tribal instincts, and "we know it when we see it" quality standards. That works fine when humans are in the loop. It falls apart when an AI is running the loop.
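What does specifying "what good looks like" mean in practice? One way to picture it is to write the quality bar down as explicit, machine-checkable rules and test every output against them. This is a hypothetical sketch, not a standard format. The `BriefSpec` fields below (required sections, a word limit, a source-link requirement) are illustrative assumptions for a competitor-pricing brief, and `violations` is a made-up helper name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BriefSpec:
    """Hypothetical spec for a competitor-pricing brief: the quality bar, written down."""
    required_sections: tuple
    max_words: int
    must_cite_sources: bool


def violations(spec: BriefSpec, brief: str) -> list[str]:
    """Return every way the brief misses the spec; an empty list means it passes."""
    problems = []
    text = brief.lower()
    for section in spec.required_sections:
        if section.lower() not in text:
            problems.append(f"missing section: {section}")
    word_count = len(brief.split())
    if word_count > spec.max_words:
        problems.append(f"too long: {word_count} words (limit {spec.max_words})")
    if spec.must_cite_sources and "http" not in text:
        # Crude proxy for "cites its sources": at least one link is present.
        problems.append("no source links")
    return problems


spec = BriefSpec(
    required_sections=("Pricing", "Risks", "Recommendation"),
    max_words=300,
    must_cite_sources=True,
)
draft = "Pricing: C is cheapest (https://example.com/c). Recommendation: match C."
print(violations(spec, draft))  # ['missing section: Risks']
```

Compare that with an instruction like "write a good brief," which gives neither the AI nor a reviewer anything concrete to check against.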

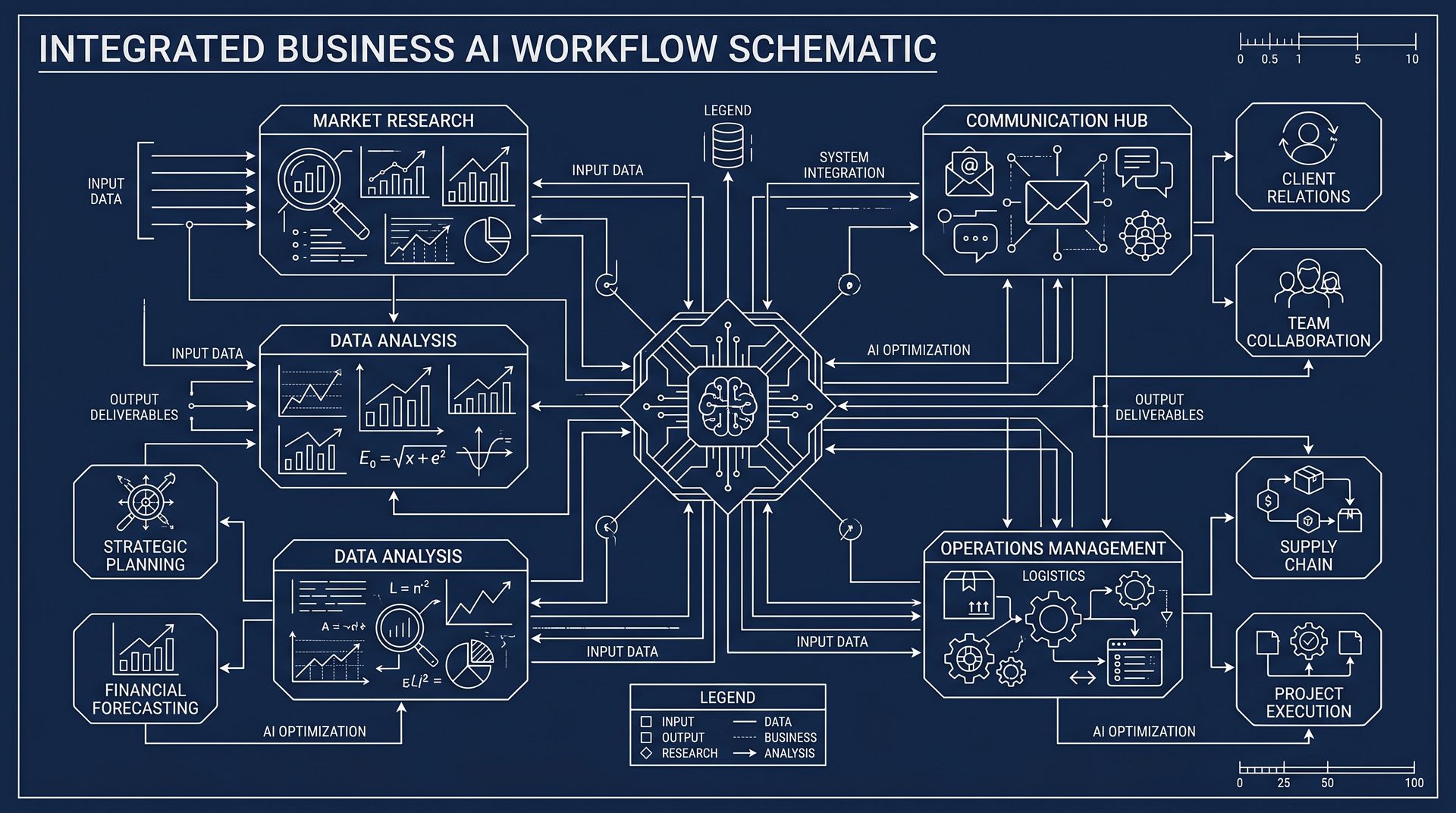

This is why the companies that will look brilliant in three years are spending this year on process architecture -- mapping workflows in detail, defining what outputs actually matter, building feedback loops that let AI improve over time. They're not just "adopting AI." They're doing the hard work of making their business legible to a machine.

What You Should Be Doing Right Now

Here's a practical framework: not a roadmap for the next decade, but a checklist for the next six months.

Audit the work, not the tech

List the tasks your team performs repeatedly that currently require a human in the loop. For each one, ask: could a new employee do this reliably from written instructions alone? If yes, it's a candidate for agentic automation. If not, start with documentation.

Start with the boring wins

The highest-value agentic use cases right now aren't impressive in a demo. They're the tasks that eat up large amounts of skilled people's time: competitive research, first-pass code review, data reconciliation, meeting summaries. That's where ROI is clearest and fastest.

Build feedback loops from day one

Agentic workflows improve when feedback flows back into them. Start collecting signals now: what worked, what failed, where the AI drifts, and what human review catches. Design the interaction so that each side, human and AI, does what it does best.
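A feedback loop can start as simply as logging every human review and tallying the results. The sketch below assumes a hypothetical `Review` record for each AI output: the task name, whether the reviewer accepted it, and what they caught. It reports per-task acceptance rates plus the most common issues, which is one way to see where the AI drifts.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Review:
    """One human review of one AI output (hypothetical record format)."""
    task: str
    accepted: bool
    issue: Optional[str] = None  # what the reviewer caught, if anything


def summarize(reviews: list[Review]) -> dict:
    """Per-task acceptance rates plus the most common issues human review caught."""
    tallies: dict = {}
    for r in reviews:
        ok, n = tallies.get(r.task, (0, 0))
        tallies[r.task] = (ok + int(r.accepted), n + 1)
    rates = {task: ok / n for task, (ok, n) in tallies.items()}
    issues = Counter(r.issue or "unspecified" for r in reviews if not r.accepted)
    return {"acceptance_by_task": rates, "top_issues": issues.most_common(3)}


reviews = [
    Review("pricing_brief", True),
    Review("pricing_brief", False, "compared the wrong competitor"),
    Review("reconciliation", False, "used stale data"),
    Review("reconciliation", False, "used stale data"),
]
print(summarize(reviews))
```

Here, reconciliation is failing every review for the same reason. That points at a data-freshness problem in the workflow, not at the model.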

Protect against failure modes explicitly

Every agentic workflow needs a defined escalation path -- what happens when the AI hits uncertainty, produces a plausible-but-wrong result, or encounters an out-of-bounds situation? Build this in from the start.
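Here is one way an escalation path might look in code. It is a sketch under assumptions: the allowed actions, the 0.8 confidence threshold, and the idea that the agent reports a confidence score at all are hypothetical. It maps the three failure modes above onto routes. Out-of-bounds actions escalate. Outputs that fail explicit checks (the usual sign of plausible-but-wrong) go to human review, and so do low-confidence outputs.

```python
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


# Hypothetical scope: the only actions this workflow may take on its own.
ALLOWED_ACTIONS = {"draft_brief", "summarize_meeting", "reconcile_rows"}


def route(action: str, confidence: float, failed_checks: list[str],
          threshold: float = 0.8) -> tuple[Route, str]:
    """Decide what happens to an agent's output before anyone relies on it."""
    if action not in ALLOWED_ACTIONS:
        # Out-of-bounds: stop and hand it to a person who owns the decision.
        return Route.ESCALATE, f"out-of-bounds action: {action}"
    if failed_checks:
        # Plausible-but-wrong usually shows up as a failed explicit check.
        return Route.HUMAN_REVIEW, "failed checks: " + ", ".join(failed_checks)
    if confidence < threshold:
        # Uncertainty: the agent's own (hypothetical) confidence score is low.
        return Route.HUMAN_REVIEW, f"low confidence: {confidence:.2f}"
    return Route.AUTO_APPROVE, "within bounds, checks passed"


print(route("send_payment", 0.99, []))
print(route("draft_brief", 0.95, ["no source links"]))
print(route("draft_brief", 0.55, []))
print(route("draft_brief", 0.92, []))
```

The explicit checks run before the confidence test on purpose. A model's self-reported confidence is a weak signal on its own, so it works better as a last filter than as the main gate.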

The Bottom Line

Agentic AI isn't a future state. It's an emerging present. The tools are real, the capabilities are advancing fast, and the organizations deploying them thoughtfully right now are building compounding advantages that will be very difficult to replicate later. The window for getting this right -- building workflow design sophistication, feedback infrastructure, and institutional knowledge documentation -- is probably 12 to 24 months. The question isn't whether agentic AI will reshape your industry. It's whether you'll be the one shaping how it happens, or the one scrambling to catch up.
